Waking up in the morning I look out of the window. A street scene. A well-maintained road. Houses and the greenery of their gardens. Parked cars and flowering shrubs. I hear muted traffic noise from the main road. Birds and the wind rustling through the tall trees.

I didn’t make these or ask them to be there, but I did choose to live on this residential street. In everyday experience there are a million and one aspects of life that I take for granted. These surroundings have evolved, or should I say developed. Flicking back the calendar, there was a transformational moment. There was a time before this built environment when this area was open fields, hedges, and trees. A rustic farmed landscape.

Systematically, a local authority gave permission for the development of this residential area back in the 1950s. Generally, what they delivered has passed the test of time. The infrastructure works, notwithstanding the propensity to dig up the pavements and roads when it doesn’t.

In taking our surroundings for granted there’s not much thought given to the transformational moment that produced this tranquil scene of urban peacefulness. Yet, it was key to what happened for the next 70 years.

Like it or not, a paper-based bureaucratic process, engaging the councilmen and councilwomen of the town and motivated private builders, produced this urban setting. Public and private interests working together.

Compare and contrast how our society is making the digital environment that we now inhabit. I could say that it’s not making it at all but rather letting it happen. As an illustration of how strange the transformation impacting us all is, I got an e-mail with this intriguing line:

This is an operational email required for your ABC account to function properly and cannot be unsubscribed from.

Here’s an interesting digital imposition in my inbox. I don’t want this ABC account. I thought I’d deleted it. Yet, its provider politely tells me that such emails can’t be unsubscribed from. I assume they think that’s to my benefit in some mysterious way.

[I won’t get sniffy about west coast Americans ending a sentence with a preposition.]

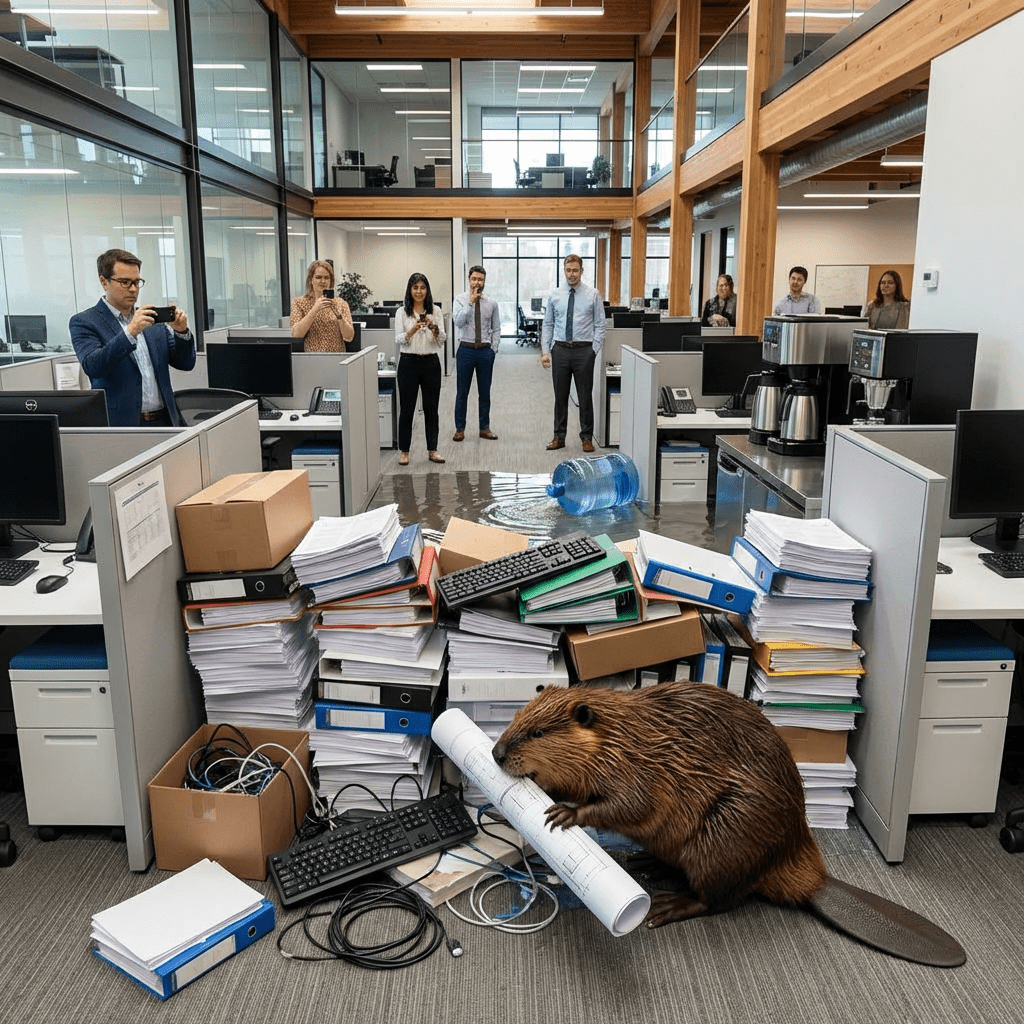

Where are my elected representatives when it comes to the regulation of the construction of our digital environment? Do the ones in my municipal, regional, or national government have any say over what happens in this fast-moving environment?

I won’t throw my hands up in horror as if there’s no one. I’m aware that there are national politicians who take an interest in the development of the digital world. Debates rage after the fact. An event occurs and an element of society’s digital transformation becomes a topic of conversation. It’s all highly reactive. Our sleeping sentinels wake up when the media points out a catastrophe or some pivotal moment of transformation.

There’s little attempt by any authority to systematically give permission for a development, or even to assert that right on our behalf. We live in a democracy where elected politicians are either asleep at the wheel or too timid to lock horns with the global digital giants.

The question I have is what kind of society will be built under these conditions?